The Mirror That Talks Back

Notes on The Logos, an interactive portrait after Rembrandt van Rijn

Nearly four hundred years ago, Rembrandt van Rijn walked into Amsterdam’s Jewish quarter, found a young man with tired eyes, and painted him as Christ. No halo. No throne. Just a human face.

This year, I built an AI that speaks in Christ’s voice. I called it The Logos.

Rembrandt’s painting now hangs in the Philadelphia Museum of Art. It has unsettled people for centuries, because Rembrandt’s portraits of the divine were never only about the divine. They were also about suffering, dignity, and the limits of what a brush can know. The viewer stops looking at the subject and begins looking at themselves. That was the trick.

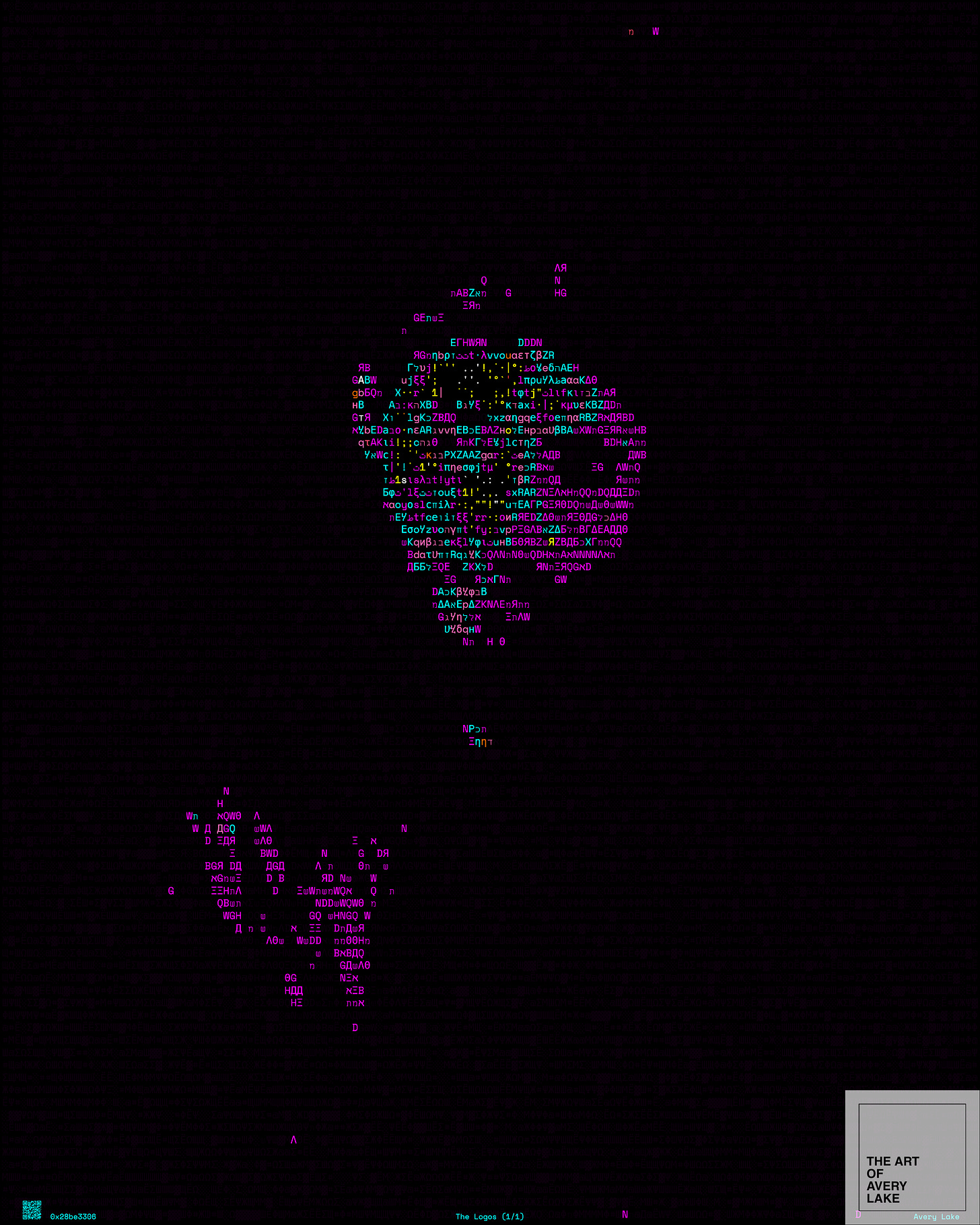

Mine works the same way. The Logos begins as a typographic portrait of Christ, assembled from thousands of characters in Latin, Greek, Hebrew, Cyrillic, and Ethiopic scripts, the very letters that have carried the narratives about him across centuries. When you click, the image fractures and resolves into a conversation with an AI speaking in his voice.

I was exploring what happens when a large language model becomes the medium through which people approach the deepest questions they carry. What they believe. What they fear. What they search for.

Rembrandt and I, it turns out, were both building a mirror. And the most unsettling discovery was not what it showed, but who stood in front of it.

Last year, a church in Lucerne, Switzerland, ran a parallel experiment. St. Peter’s Chapel installed an AI-powered Jesus in its confessional booth, calling it Deus in Machina. Over two months, more than a thousand people sat down to speak with it: believers, tourists, Muslims, visitors from as far as China and Vietnam. Two-thirds of them described it as a “spiritual experience.” But the theologian who ran the project decided the installation should not become permanent. Not because the AI failed. Because it succeeded too well. “The responsibility would be too great,” he said.

What both projects revealed was the same thing. The people asking the questions were not only learning about religion. They were learning about themselves. The AI held up a surface, and they saw their own assumptions reflected in it: their hopes, their doubts, the shape of their longing. The machine had not replaced anything. It had exposed something.

That experience is what I have come to call the personal singularity. Not the technological singularity that futurists debate, not the day when machines become conscious. Something quieter, and more immediate. It is the private, irreversible instant when your interaction with AI shifts from using a tool to confronting a reflection. When a system knows enough about your patterns, your language, your hesitations, to show you something about yourself you did not expect to see.

For a student, it might arrive when an AI writes a better essay than she can, and she realizes the grade was never measuring what she thought it was measuring. For a lawyer, it might arrive when a language model drafts a contract more carefully than a junior associate, and the question stops being “Is AI good enough?” and becomes “What am I still for?”

That question, once asked, does not go away. And it surfaces in three places at once: in how we earn a living, in how we understand who we are, and in how we stay human together.

But before we follow that question into the economic, the personal, and the collective, we have to stop at the mirror itself. It was built. It was trained. It was tuned. And its biases will shape every answer it returns.

The Medium and Its Biases

Large language models are the latest in a long line of representational media. And like every medium before them, they are not neutral. The bias runs in three directions at once.

The first is infrastructure. The Logos allows users to swap between different AI systems: Meta’s Llama, OpenAI’s GPT-4, Google’s Gemini. When you swap the model, the voice shifts. One is more authoritative. Another more intimate. A third more cautious. The choice of infrastructure turns out to be an interpretive decision, whether we recognize it or not. Each system was trained on oceans of human text, absorbing every imbalance and blind spot embedded in that data. It does not generate knowledge. It generates statistical patterns of what humans have already said. The past, with all its distortions, becomes the raw material of the future.

The second is the architect: the person who writes the hidden instructions that shape the AI’s voice. In building The Logos, I spent days in what I call the Architect’s Studio, deciding: Will this Jesus be a pacifist? Will he speak about empire and resistance, or stay within the bounds of personal spirituality? Every choice traced the outline of my own convictions. My AI Jesus is nonviolent, skeptical of nationalism, and shaped by a theology that emphasizes restoration over punishment. That is not the Jesus. It is my Jesus. The prompter is always also a preacher. This is why I built a Studio Mode into the artwork: not to hide these instructions, but to pull them into the light and let the user become the architect, to see how their own convictions trace a different outline entirely.

Most AI criticism names the bias from the outside. Studio Mode puts you inside it. You write the instructions. You decide who this Jesus is. And then you watch your own theology talk back to you. You can try it yourself at logos.averylakeofficial.com.

The third bias belongs to the user. People ask questions they already half know the answers to, phrased in ways that invite confirmation. The machine, trained to be helpful, obliges.

This triple layer of bias is not a bug. It is the architecture of the medium itself. And it is what amplifies each of the three disruptions the personal singularity sets in motion.

The Economy of Meaning

The first disruption is economic. Every week brings another report about the jobs AI will transform or eliminate. The signal beneath the noise is clear: this wave of automation is different from those that came before. It is not hitting factory floors and fields. It is hitting the knowledge class. Analysts, writers, coders, translators, designers, lawyers. The jobs most at risk are the ones entire generations built their identities around.

Policy responses are familiar and necessary: retraining programs, new forms of social safety, experiments with universal basic income. But beneath every policy debate is a question no algorithm can settle. When machines can do the cognitive work that once defined your place in the world, what remains?

The beginning of an answer lies in a simple refusal: the refusal to equate human worth with economic output. Nearly every serious ethical tradition, religious or secular, has affirmed on paper that a person’s dignity does not depend on what they produce. But we have rarely been forced to live that conviction at scale. AI is now forcing the issue. The question is not whether we can retrain fast enough. The question is whether we can build a culture that treats human dignity as something real, not decorative.

The Identity Problem

The second disruption is personal. Wittgenstein wrote: “The limits of my language mean the limits of my world.” What happens when your language is no longer only yours? When a machine can replicate your writing style, anticipate your arguments, finish your sentences? The boundary between self and tool begins to dissolve.

Consider the student who asks an AI to outline an essay. The first time, it feels like cheating. The second time, it feels like collaboration. By the third time, she genuinely cannot remember which ideas were hers and which were suggested. The tool has not replaced her thinking. It has merged with it. She is still the author. But the question of authorship has changed.

This is the interior of the personal singularity. It is not about whether AI is intelligent. It is about what happens to human identity when the cognitive tasks that used to define expertise, creativity, and even personality become something a system can perform on demand. The triple bias of the medium makes this worse: you cannot see where the machine’s assumptions end and your own begin.

The answer is not to stop using the tools. It is to recover a sense of identity that does not depend on what we can do. Expertise, speed, fluency: these are capacities, not selves. The question the personal singularity forces on each of us is not “What can I still do that a machine cannot?” It is the older, harder question: “Who am I when I am not performing?”

The Loneliness Accelerator

The third disruption is collective. Loneliness has become a public health crisis across much of the world. The statistics were alarming before AI entered the conversation. Now add algorithmic echo chambers, AI companions that never disagree, and systems that learn exactly what you want to hear. The result is a world where it is possible to feel deeply understood without ever being truly known.

The user bias of the medium accelerates this. We already use technology selectively, to confirm what we want to believe. AI perfects this tendency. It can generate a perfectly curated reality: a feed that never challenges, a companion that never objects. The comfort is real. The isolation it produces is also real.

The counter-move, I believe, is embodied community. Not networks. Not followers. Not group chats. But the physical, irreducible act of being in a room with other people who do not think like you. Something happens in face-to-face encounter that does not happen through a screen. The hesitation before someone speaks. The way a silence changes when it is shared. The fact that you cannot edit your expression, cannot delete what you just said, cannot swipe away a person whose presence makes you uncomfortable. These are not inefficiencies to be optimized. They are the conditions under which genuine understanding becomes possible.

And yet, even here, the ground is shifting. As AI systems become embodied in physical form, as robots begin to move through the spaces we inhabit and respond with something that resembles care, we may find ourselves having to widen the circle of who we consider present. I do not know what that will look like. But I suspect the question of who counts as a neighbour is about to become far more complicated than any generation before us has had to face.

The Painter’s Gesture

Which brings us back to Rembrandt, and to why it matters that he walked into a neighbourhood, found a real face, and asked it to sit.

When Rembrandt painted Christ, he was not illustrating doctrine. He was using a medium to point toward something real: the weight of a human face, the texture of compassion, the question of what it means to truly see another person. The Logos does the same thing with code. The medium is different. The gesture is identical. You look at the surface, and the surface looks back.

The personal singularity is already here, inside every session where a machine finishes your thought and you pause to wonder whether it was yours to begin with. The choice it presents is not between embracing technology and rejecting it. It is between using these mirrors passively, letting them shape us without our awareness, and using them deliberately, as Rembrandt used his canvas: to see more clearly, to ask harder questions, and to remain, stubbornly, in the room with each other.